Artificial Intelligence

Businesses across all sectors are seeking to harness AI's disruptive potential, whether to augment and streamline existing processes or to build new products that leverage powerful AI models.

Implementing, developing and managing AI within your business in a safe and compliant way involves navigating an increasingly complex landscape which touches on numerous legal areas.

Our AI practice brings together experts from all legal disciplines, enabling us to provide a holistic, cross-practice and cross-sector approach.

Five principles. One smarter approach.

Our legal experts share TLT’s approach to responsible innovation in this short-form video series, with each video focused on one of our five guiding principles.

1. Govern the use of intelligent systems across your organisation

2. Assess risks and opportunities across new and existing use cases

3. Comply with fast-changing laws and regulations

4. Transact with technology providers and deliver solutions to customers

5. Protect your investments, assets and outputs

Related insights & events

AI chatbots and competition law: A look into the Meta WhatsApp antitrust investigations

AI in Motion - How to make sustainable AI a reality

Agentic commerce - The next legal frontier in AI-powered shopping

AI sovereignty and the Intel precedent - How governments are redefining technology control

Making digital regulation work - a framework for digital regulation compliance

Getty Images v Stability AI: Retail Sector Impact

AI and the future of payments: Five Big Questions with Dave Gardner

Agentic AI: A Short Introduction and Key Legal Considerations

AI and financial crime - five big questions with Ben Cooper

When AI shops for you - Redefining the payments journey

Agentic AI and Data - Five big questions with Emma Erskine-Fox

Generative AI and workforce transformation - 5 big questions with Sarah Skeen

TLT Partner appointed as Co-Chair of major UK anti-fraud coalition

TLT advises owners of leading waste recycling and specialist vehicle services operator on sale to major UK waste management services business

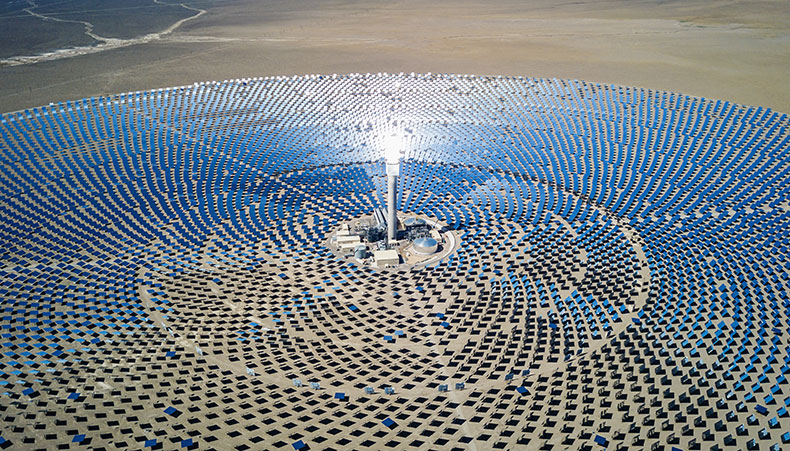

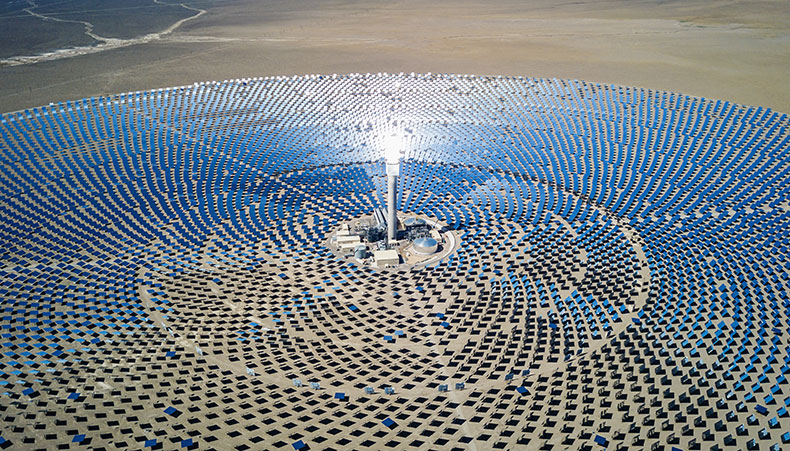

TLT advises leading developer and independent power producer on major solar and battery portfolio project financing

TLT strengthens national real estate offering with strategic partner hire trio

TLT advises CCI Global on landmark strategic merger with Startek

TLT advises award-winning accountancy firm on acquisition of Green Square

TLT advises on the sale of cloud optimisation specialists

Wellbeing at TLT – a conversation with Claire Graham, Emma Erskine-Fox and Chantal Stroker

TLT trifecta as The Legal Business Awards 2026 shortlist announced

TLT advises on strategic refinancing of BRCK Group PLC

TLT advises clean energy developer on renewable energy park investment

TLT continues to invest in Real Estate with appointment of new partner

TLT awarded Stonewall accreditation for LGBTQ+ inclusive culture

TLT shortlisted for ‘UK Firm of the Year’ at The Lawyer Awards 2026

The Balancing Act: Regeneration beyond the contract

Energise2030: Developing energy projects for the long-term

The Balancing Act: Partnerships, trust and patient capital

ESG in Action: Inside the Government Legal Department’s social mobility agenda

ESG in Action: Climate resilience in real terms: Breaking sustainability silos with Santander

ESG in Action: Hospitality and sustainability working together for future successes

ESG in Action: Beyond returns: Inside the world of responsible investment

ESG in Action: Trailblazing with Ablaze: Helping young people succeed

ESG in Action: Power from the panels: Profit with purpose with Eden Sustainable

The Balancing Act: Setting the scene for regeneration

ESG in Action: Top-down and bottom-up momentum: The next chapter of social mobility with SMBP

ESG in Action: Balancing the basket with the British Retail Consortium

Energise2030: Grid reform, Gate 2 and what's next for connections

ESG in Action: Banking on biodiversity with Nationwide Building Society

Knocking down barriers to on-site generation: A practical guide to behind the meter solar for your business

AI in motion: Balanced algorithms - addressing bias in AI

When good tax decisions go wrong: Governance, filing positions and HMRC scrutiny - Webinar

SMConnect Webinars: Practical Insight for leaders in SMCR roles

Biodiversity Net Gain: What’s changing and what it means for you

DMCC Act - The next chapter for CMA Consumer Law Enforcement

International Fintech Case Study: Brexit Contract Migration

UK Utilities Case Study: Employment Law and Brexit Planning

TLT oversees an international acquisition of a specialised South West business

TLT advises on the £90m sale of long-standing client's business

Advising a fast-growth eCommerce consultancy on a share capital sale and reinvestment

Bitesize ERA - Episode 6: Delivering change under the ERA reforms

Preparing for the Procurement Act 2023 - construction industry focus

Community, connection and collaboration - TLT and Forest Green Rovers FC